KEYWORDS: Teplice, Rumburk, church music, composers, choir masters, 19th century, Deutschböhmen

Mgr. Ludmila Mikulášová, Ph.D. / Národní knihovna České republiky (National Library of the Czech Republic), Mariánské náměstí 190/5, 110 00 Praha 1

]]>KEYWORDS: Teplice, Rumburk, church music, composers, choir masters, 19th century, Deutschböhmen

Mgr. Ludmila Mikulášová, Ph.D. / Národní knihovna České republiky (National Library of the Czech Republic), Mariánské náměstí 190/5, 110 00 Praha 1

]]>KEYWORDS: digital libraries, copyright law, artificial intelligence, text and data mining, legal licenses, machine translation, automatic summarization, Czech Republic

JUDr. Bc. Lucie Smolka, Ph.D. / Lawado | law of ideas, Táborská 2370/189, 615 00 Brno

Mgr. Jana Hrzinová / Národní knihovna České republiky, Mariánské náměstí 190 /5, 110 00 Praha 1

Mgr. Václav Jiroušek / Národní knihovna České republiky, Mariánské náměstí 190/5, 110 00 Praha 1

Mgr. Lenka Maixnerová / Národní knihovna České republiky, Mariánské náměstí 190/5, 110 00 Praha 1

]]>KEYWORDS: bees, beekeeping, entomology, early printed books, beehive, honey, National Library of the Czech Republic, provenance

Mgr. Markéta Bendlová / Národní knihovna České republiky (National Library of the Czech Republic), Mariánské náměstí 190/5, 110 00 Praha 1

]]>KEYWORDS: transcription models, historical manuscripts, transcription of Czech documents, transcription of Slovak documents, Transkribus platform

prof. PhDr. Dušan Katuščák, PhD. (ORCID 0000-0001-7444-1077), Mgr. Klára Pohlová, Bc. Lukáš Němec, BcA. et Bc. Vojtěch Říha / Slezská univerzita v Opavě, Filozoficko-přírodovědecká fakulta, Ústav bohemistiky a knihovnictví (Silesian University in Opava, Faculty of Philosophy and Science, Institute of the Czech Language and Library Science), Masarykova třída 343/37, 746 01 Opava

]]>KEYWORDS: disinfection, library, stamps, ink pencil, paper, conservation, butanol vapors, fixation

Introduction

Books and other types of library documents include various inscriptions and handwritten annotations, records of ownership, notes by readers, call numbers, and stamps of the document’s current and past owners, in addition to their texts. Such supplementary records are an important source of information about the book, its previous owners, readers, and the location where the book was created and stored. Especially, it is necessary to preserve library records without any changes. Such notes are not always made using durable, stable inks or dyes. This also applies to stamp inks. Currently, archival-quality inks and stamp inks, which are intended to be permanent, are preferred for registration records. However, very unstable ink pencils were used in the past, even in historical collections, reacting with a wide range of solvents of both polar and non-polar nature. The protection and eventual fixation of such records is an important part of any conservation or restoration actions on a book. Any possible dissolution and activation of a writing substance will not only make it impossible to identify the item in library records, but also means an irreversible damage to the document itself.

In the field of document disinfection, alcohols are one of the oldest antiseptic agents effective in high concentrations against a wide range of bacteria, fungi, and also against many viruses. The mechanism of action of alcohols on filamentous fungi consists in the coagulation of proteins in cell walls and cytoplasmic membrane. Alcohols also increase fluidity of lipids in cytoplasmic and mitochondrial membranes of fungi. This results in breakdown (lysis) of the outer cytoplasmic membrane, release of cell contents, and coagulation of enzymatic proteins (Karbowska-Berent et al., 2018). Some attempts to apply alcohols to decontaminate historical documents have been carried out in the past using various application methods and different concentrations. Aqueous alcohol solutions have been applied by spraying, immersion, and surface rubbing. A high efficiency of their vapours has also been demonstrated, which have proven to be very gentle on materials subject to treatment. It was found out that under certain conditions (enclosed compartment, 96% butanol solution, exposure time of 48 hours, temperature of 25 °C) both vegetative forms of fungi and their spores are eliminated (Orlita, 1991). However, Karbowska-Berent (2014) found unacceptable changes in some coloured media (print, ballpoint pens) when alcohol is applied by immersion. Bronislava Bacílková (2006) made similar observations in the National Archives‘ laboratories regarding pens, permanent ink/indelible pencils and markers, and established that with some types of writing materials, the text can dissolve or even bleed through to the paper’s reverse side. However, such orientation tests were only some auxiliary tests conducted while testing the effects of alcohol vapours on fungi. For this reason, further investigation was required to determine the stability of annotations, ink pencils and stamps and other recording media from a wide range of media (which we encounter in library documents) in butanol vapours, which have been used for many years in the National Library of the Czech Republic as a standard disinfectant for contaminated collections.

Experimental part

Objective of the work:

Alcohols, especially butanol, have been used for disinfection for a very long time. The recommended concentration varies between 50 and 90%, depending on specific conditions. Alcohol is used in the form of vapours to disinfect books and archival materials, as this application form has a very gentle effect on the materials being treated. However, with some writing materials, especially ink pencils, the text may dissolve and the colours may change. As mentioned above, preliminary tests of the solubility of writing materials have already been carried out in the past in the National Archives (Bacílková, 2003). The main objective of our work was to monitor visual changes in recording media, such as ink pencils and stamp inks, following exposure to butanol vapours under conditions set for the disinfection of library collections infected with fungi. Changes in the colour of the prepared samples were measured and any colour migration was documented by macro- and micro-imaging.

List of recording media:

Twelve different recording media (see Table 1) from the 1970s to 1980s were tested, including ink pencils (samples 1–8), stamp inks (samples 9, 11, 12) and fountain pen ink (sample 10). Red ink pencils are indicated by the index “a” in all the following graphs and tables, while blue ink pencils are indicated by the index “b”.

Ink pencils – a pencil lead in a wooden casing, pencil leads of different compositions and colours: classic silver, purple, red, and blue. The main component of ink pencils include water-soluble organic dyes, mostly anionic, such as Methyl Violet (C.I. Basic Violet 1), Malachite Green (C.I. Basic Green 4) or acid dyes such as eosin (C.I. Acid Red 87) (Ďurovič et al., 1999).

Stamp inks can be divided into metal and rubber stamp inks. Stamp inks are applied to paper by pressing a rubber or metal stamp immersed in a dye. Oil-free rubber stamp inks have been tested, which consist of cationic dyes dissolved in a mixture of water, glycerine or higher glycols and alcohols. In contrast to metal stamps, which have an oil composition that is insoluble in water, oil-free stamp inks are more or less readily soluble in water. Violet, blue and black stamp inks contain cationic dyes, while red and green stamp inks contain anionic dyes. Blue ink was mainly produced from basic arylmethane dyes (Basic Blue 11, Basic Blue 26, and Basic Blue 52, formerly Basic Violet 1, Basic Violet 3, and Basic Blue 9). Black paint was most often produced from stable nigrosine dyes (Ďurovič, 2002) (Maková, 2019).

Fountain pen ink is a mixture of synthetic tar dye in distilled water, preservatives (phenol, formaldehyde), and pH adjusters (acetic acid, sodium carbonate). An anionic red ink was tested, probably made of the bluish xanthine dye eosin B (Acid Red 91). Red fountain pen ink from around the 1980s (sample no. 10) was used to replace red stamp ink from the same period of time (Ďurovič, 2002).

The exact chemical composition of the recording media was determined for samples 1, 2, 8a and 8b (see Table 1) by LC/MS analysis performed at the Central Laboratory of the Institute of Chemical Technology, Prague. The analyses were performed on a high-resolution LTQ Orbitrap Velos (Thermo Scientific) mass spectrometer in several ionization modes: ESI+ (electrospray ionization in positive mode), ESI− (in negative mode), APCI+ (atmospheric pressure chemical ionization in positive mode) and APCI−. The extracted solutions of the recording media were injected into the mobile phase stream (methanol) via a 10 µl injection loop (Rheodyne).

Based on the results of the analysis, it was found that the Hardtmuth Koh-I-Noor silver ink pencil (sample no. 1) contains dyes based on a mixture of Methyl Violet 10B, 6B, and 2B. In the Mephisto ink pencil (sample no. 2), a dye composed mainly of Methyl Violet 10B was identified. The Hardtmuth Koh-I-Noor red ink pencil (sample no. 8a) contains the Solvent Red 43 dye, while the Koh-I-Noor blue ink pencil (sample no. 8b) shows the presence of the Acid Blue 93 dye.

Table 1 List of recording media used

| Set number | Recording medium: |

|---|---|

| 1 | Ink pencil Hardmuth Koh-I-Noor silver |

| 2 | Ink pencil Hardmuth Koh-I-Noor Mephisto purple |

| 3 | Ink pencil Hardmuth Koh-I-Noor COP 1561 Hard silver |

| 4 | Ink pencil Hardmuth Koh-I-Noor Versatil 5205 silver |

| 5 | Ink pencil L.C Hardmuth Mephisto COP 73B Medium silver |

| 6 | Ink pencil Bohemia Works Bluestar COP 2726 Soft silver |

| 7 | Ink pencil SUNPEARL 3453 red/blue |

| 8 | Ink pencil Hardmuth Koh-I-Noor COP 1561 E/G red/blue * |

| 9 | Stamp ink for textile NORIS 325 black |

| 10 | Ink for fountain pens Koh-I-Noor MSP 4201 red |

| 11 | Oil-free stamp ink for rubber stamps GAMA JK 738 341 blue |

| 12 | Oil-free stamp ink for rubber stamps J.P.K CHEM JK 738 341 blue |

Work procedure:

Test 1: Simultation of Disinfection of Freshly Applied Inks

In the first test, the conditions of disinfection in butanol for freshly applied inks were investigated. For this purpose, twelve sets of samples were prepared. Each set contained six samples with inscriptions made using one of the recording media on handmade paper. The list of the recording media is given in Table 1. The samples of the recording media were applied to handmade paper from Velké Losiny, with a grammage of 240 g/m2. This is a hand-drawn graphic paper without transparency. The paper is made of a mixture of linen and cotton. The dimensions of the paper samples were 5 × 2.5 cm. The inscription “2023” was written on each sample with the selected recording media and a solid circle with an approximate diameter of 1 cm was created. Each sample was further marked with the sample number and set number (see Fig. 1). Rubber stamps were used to apply the stamp colours.

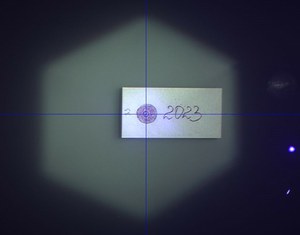

Fig. 1 Photograph of a sample taken with VSC 8000 in incident visible spectrum lighting (A. Kazanskii, National Library of the Czech Republic)

In the recording media sample sets prepared, their colour was measured in the spectrophotometer mode of the VSC 8000 (Foster + Freeman) video spectral comparator, hereinafter referred to as "VSC". The colours were analysed in a solid circle in three places with the calculation of the average values of colour change (see Fig. 2). Subsequently, individual samples were photographed using VSC in incident visible spectrum lighting (see Fig. 1).

Fig. 2 Sample colour measurement performed using VSC in spectrophotometer mode (A. Kazanskii, National Library of the Czech Republic)

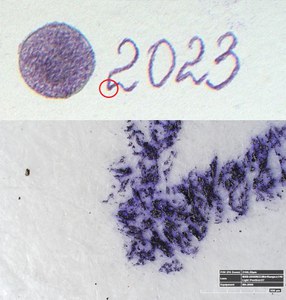

The microphotographs of the recording media were taken using a Hirox RH-2000 3D digital microscope with the MXB 2500 lens at a mid-range 200× magnification and a light position of 34–32. The microphotographs were taken in the same area for all samples, at the bottom edge of the first number "2" of the inscription "2023" (see Fig. 3).

Fig. 3 Macro- and microimages of the sample made with the VSC and the Hirox RH-2000 3D digital microscope (A. Kazanskii, National Library of the Czech Republic)

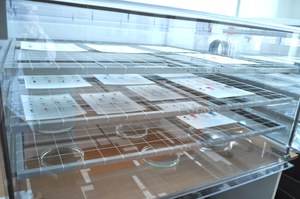

All sets of samples were subsequently exposed to vapours of 94–96% 1-butanol solution (Penta s.r.o.) with water, in a hermetically sealed ARTWET disinfection chamber. The evaporation of the aqueous alcohol solution was ensured by open Petri dishes with a butanol solution, which were placed at the bottom of the disinfection box. The amount of solution corresponded to the volume of 900 ml of aqueous solution per 500 dm³ chamber. The samples were placed on cardboard, which simulated the cover of a book. They were placed on three retractable silicone meshes at distances of 14, 26, and 38 cm above the Petri dishes (see Fig. 4 and 5). The simulated disinfection process itself took place for 48 and 72 hours. Each sample in the set (a total of 6 samples) corresponded to specific disinfection conditions, particularly the combination of distance from the butanol source and the duration of action. Throughout the process, the temperature and relative humidity were monitored both inside the chamber and in the room.

Fig 4 Samples of ink pencils and stamps on cardboard in a hermetically sealed disinfection box (R. Zembjaková, National library of the Czech Republic)

Fig 5 A more detailed view of samples of ink pencils and stamps on cardboard in a hermetically sealed diinfection box (R. Zembjaková, National Library of the Czech Republic)

Test 2: Simulation of Disinfection of "Activated" Inks

To simulate the disinfection of "activated" inks, eight sets of samples containing all types of water-soluble ink pencils (sets 1-8, see Table 1) were prepared. During the application of the media to the paper, a small amount of distilled water was added locally to increase the reactivity of the inks. In all other respects, the procedure for preparing and disinfecting samples remained the same as in the first test. The aim of the activation was to partially simulate the application of a moistened ink pencil nib, the aging of the ink and its response to changes in external relative humidity over time. This test is for reference only and is used to observe the ink in different states. The application procedure described cannot be considered artificial aging.

Test 3: Simulation of Disinfection of Recording Media Fixed with Cyclododecane

Twelve sets were prepared to simulate the disinfection of fixed recording media (see Table 1). In this test, ink fixation was performed by applying a cyclododecane solution, followed by applying molten cyclododecane (Paulusová, 2000). A solution of cyclododecane/petroleum benzine was prepared by dissolving 10 g of cyclododecane in 8 g of petroleum benzine while stirring at normal laboratory temperature. The solution was applied using a small brush to the samples from both sides. Molten cyclododecane was prepared by dissolving cyclododecane in a solder attachment while maintaining a constant temperature of 70 °C. The molten material was applied with a small brush on both sides of the paper, creating a visible crust. In all other details, the sample preparation and disinfection procedure remained the same as in the first test.

Test 4: Simulation of Disinfection of Recording Media Fixed with Mesitol and Rewin Solutions

Twelve sets of samples were prepared to simulate the disinfection of fixed recording media (see Table 1). Ink fixation in Test 4 was carried out using the application of 1.2% Mesitol NBS solution and 6% Rewin EL solution (Bredereck 1988). The Mesitol NBS anionic agent was prepared by dissolving powdered Mesitol under constant stirring in deionised water. The liquid concentrate of the Rewin EL cationic agent was diluted to a 6% aqueous solution. The solution was applied by the immersion method, which proved to be the most gentle option for the recording media in this test.

For anionic agents such as ink pencils and fountain pens (sets 1-8 and 10, see Table 1), the samples were first immersed in the Rewin solution. After drying, they were immersed in the Mesitol solution. For cationic stamp inks, the procedure was reversed: the samples were first immersed in the Mesitol solution and then in the Revin solution. Finally, all the samples were thoroughly dried.

In all other aspects, the sample preparation and disinfection procedure remained the same as in the first test.

Results and Summary

Disinfection Temperature and Humidity

During the experiment, the room temperature ranged between 22.0 and 23.6 °C, and the relative humidity between 23.9 and 31.8 %. The temperature in the hermetically sealed box reached approximately the same values as in the room, but the relative humidity gradually increased, up to 79% in 40 hours (see Fig. 6). The minimum time required for effective disinfection is 48 hours, but the risk of activation of recording media, binders, and undesirable changes may increase.

Fig 6 Development of relative humidity in a hermetically sealed box in standard 48-hour disinfection procedure

Results of test 1:

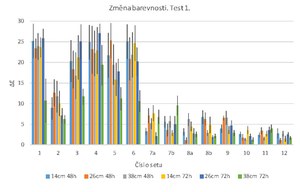

When measuring the colour changes of the samples, the results were expressed through the coefficient of overall colour change ∆E, which is a generally accepted indicator for monitoring colour differences. For better orientation, a scale was set to determine the degree of difference between two colours. Colour changes of ∆E less than 0.2 are considered negligible, changes ranging from ∆E from 0.2 to 0.5 are considered very small, changes ranging from 0.5 to 1.5 are considered small, changes ranging from 1.5 to 3 are considered clearly perceptible, changes ranging from 3.0 to 6.0 are considered medium, and ∆E above 6 indicates a high colour difference (Zmeškal 2002).

In the first test, the ∆E values for silver ink pencils (sets 1-6) exceeded 10, while for the purple Mephisto ink pencil they amounted to about 8. This indicates that there were significant and clearly visible changes in colour due to exposure (see Fig. 7). Such changes, including ink bleeding, are clearly visible in the macro- and micro-photographic documentation (see Table 2). The change in colour after 72 hours of exposure was dependent on the distance of the samples from the source of butanol, with the lowest values being achieved at a distance of 38 cm. The main effect that can be observed on microphotographic documentation is significantly lower degree of the medium’s bleeding. Water-soluble polar organic dyes are the main ingredient in silver ink pencils. The reason for such significant changes in colour and bleeding can be both high relative humidity and high concentration of butanol in the atmosphere during disinfection.

Minor colour changes were observed in the case of coloured ink pencils (sets 7-8), with the ∆E value reaching approximately 6, which corresponds to a moderate colour change (see Fig. 7). No bleeding was found. This suggests that the composition of the selected blue and red ink pencils shows higher resistance to water solubility and butanol vapours.

Stamp ink from set 9 showed significant ink bleeding during exposure in areas with a higher layer of ink. The intensity of such bleeding was dependent on the distance of the samples from the butanol source (see Table 2).

For liquid fountain pen inks and stamp inks (sets 10-12), even minor colour changes were recorded, with the ∆E value ranging from 1.5 to 3. There was also no bleeding of the recording media during the test, indicating a high resistance of such media to water solubility and butanol vapours (see Fig. 7).

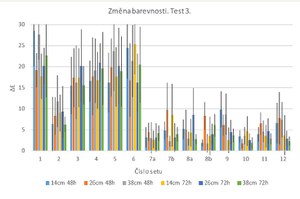

For clarity, all tables with photographic documentation show three states of the samples: 1) state before disinfection, 2) state after disinfection with a minimum exposure time of 48 hours and a distance of 14 cm from the butanol source, 3) state after disinfection with maximum exposure time and distance.

Fig. 7 Overall colour change ∆E of all sets in Test 1 as a function of exposure time and distance from the butanol source. Exposure time: 48/72 hours. Distance from butanol sources: 14/26/38 cm.

Table 2 Comparative macro- and microimages of Test 1 samples made using the VSC (upper part of the images) and the 3D digital microscope. Comparison of pre-exposure samples with post-exposure results. For the demonstration, exposure times of 48/72 hours and distances from butanol sources of 14/38 cm were selected. (A. Kazanskii, National Library of the Czech Republic)

Results of Test 2:

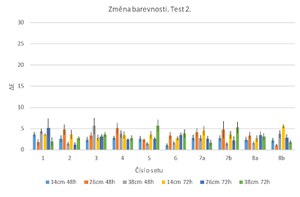

Already during sample preparation and the reaction of the colour component of the inks with water, all ink pencils (sets 1-6) underwent a significant colour change, with the ∆E value exceeding 10. On the other hand, blue and red ink pencils (sets 7-8) retained their original hue.

After the second test was conducted on all "activated" silver and coloured ink pencils (sets 1-8), the values of the overall colour change ∆E did not exceed ∆E = 5.7, regardless of the exposure time and the distance of the samples from the butanol source (see Fig. 8). This means that there were visible to invisible changes in colour as a result of exposure. The main change was in the slight bleeding of the ink, which is particularly evident at the edge of the record‘s trace on the microphotographic documentation, especially in silver ink pencil samples (see Table 3). In this test, we can assume that the relative humidity did not affect the change in the colour of those samples that had previously undergone a reaction with water. The main factor in the changes here may have been the effect of butanol, leading to further ink bleeding. With the exception of set 6, the observed adverse effect decreased with increasing distance from butanol sources, but did not affect the resulting ∆E values (see Table 3).

Fig. 8 Overall colour change ∆E of all sets of Test 2. Exposure time: 48/72 hours. Distance from butanol sources: 14/26/38 cm.

Table 3 Comparative macro- and microimages of Test 2 samples made using the VSC (upper part of images) and the 3D digital microscope. Comparison of pre-exposure samplse with post-exposure results. For the demostration, the exposure time is 48/72 hours and the distance from butanol sources is 14/38 cm. (A. Kazanskii, National library of the Czech Republic).

Results of Test 3:

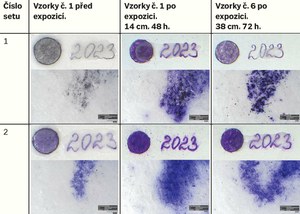

The measurement of colour change in this test was complicated by the presence of a thick, gradually evaporating layer of cyclododecane on the surface of the recording media. This factor affected the reproducibility of the measurements and the resulting standard deviation values. The average values of ∆E in Test 3 differed only to a minimum extent from the results of Test 1, which indicates that under such conditions cyclododecane fixation can be considered as a less effective protection measure against the undesirable effects of disinfection (see Fig. 8).

As a result of exposure, all silver ink pencils underwent significant and clearly visible colour changes, with ∆E values exceeding 15. For the Mephisto purple ink pencil (set 2), the ∆E value reached approximately 8. The inks were bleeding regardless of the distance of the samples from the butanol sources and the exposure time. These results are evident in macro- and micro-photographic documentation (see Table 4). Minor colour changes were observed in coloured ink pencils (sets 7-8), with ∆E values ranging from 5 to 10. They also did not bleed during the test.

For stamp ink from set 9, there was still some ink bleeding during the exposure. For liquid fountain pen ink and stamp inks (sets 10-12), like in Test 1, the lowest degree of colour changes in the range ∆E from 2 to 8 and minimum degree of bleeding were observed (see Table 4).

Fig. 9 Overall colour change E of all sets of Test 3. Exposure time: 48/72 hours. Distance from butanol sources: 14/26/38 cm.

Table 4 Comparative macro- and microimages of Test 3 samples made using the VSC (upper part of the images) and the 3D digital microscope. Comparison of pre-exposure samples with post-exposure results. For the demonstration, the exposure time is 48/72 hours and the distance from butanol sources is 14/38 cm. (A. Kazanskii, National Library of the Czech Republic)

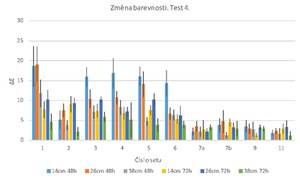

Results of Test 4:

Ink fixation in Test 4 using 1.2% Mesitol NBS solution and 6% Rewin EL solution can be considered as a partially effective method to protect against the adverse effects of disinfection. Unlike in Test 1, in which the inks were not fixed, the resulting average ∆E values for silver ink pencils (sets 1-6) in Test 4 were lower and dependent on the distance from the butanol sources. With an exposure time of 72 hours and a distance of 38 cm, the values for the silver ink pencils were below ∆E = 5.8, and below ∆E = 2.2 for the purple ink pencil (set 2). For the coloured ink pencil (set 7), there was an even smaller colour change, where the average value of ∆E reached 3. Unlike in Test 1, none of the ink pencils underwent bleeding. In the case of the stamp ink (set 9), it did not bleed during the exposure only at a greater distance from the butanol sources (28–38 cm). The stamp ink (set 11) measured showed lower to medium colour changes ∆E in the range from 1.1 to 3 (see Fig. 9).

For the ink pencil (set 8), fountain pen ink (set 10), and stamp ink (set 12) sets, there was considerable bleeding during fixation by immersion in the Revin or Mesitol NBS solutions, which made it impossible to achieve reproducible results. In sets 6, 7 and 11, a slight but still undesirable bleeding was observed during fixation, as can be seen in the photographic documentation (see Table 5). Such reactions of the recording media constitute a fundamental flaw in the method. Based on the tests conducted, it has been shown again that before each application of solutions of the Mesitol NBS and Rewin fixatives, it is necessary to conduct combined solubility tests and, if necessary, use solutions with different concentrations adapted to specific types of recording media (Bredereck 1988).

Fig. 10 Overall colour change ∆E of all sets of Test 4. Exposure time: 48/72 hours. Distance from butanol sources: 14/26/38 cm.

Table 5 Comparative macro- and microimages of Test 4 samples made using the VSC (upper part of the images) and the 3D digital microscope. Comparison of pre-exposure samples with post-exposure results. For the demonstration, the exposure time is 48/72 hours and the distance from butanol sources is 14/38 cm. (A. Kazanskii, National Library of the Czech Republic)

Conclusion

Silver ink pencils proved to be the most problematic group of recording media that were tested. Under normal disinfection conditions, such inks significantly change colour and bleed, regardless of the distance from the source of butanol. Where silver ink pencils are water-activated before writing, a greater distance from the butanol source may reduce the rate of their bleeding and prevent further significant discolouration. A combination of Mesitol NBS and Revin EL solutions can be used to fix silver ink pencils, which reduces possible discolouration and minimises bleeding of recording media during disinfection. However, it is essential to carefully select the concentration of the solution and the method of its application. It is also recommended to perform a solubility test to avoid damage to the record. Purple ink pencils showed similar behaviour to silver ink pencils during the test, but the colour changes were less pronounced. Even smaller colour changes were observed with coloured ink pencils, such as red and blue. Such recording media showed moderate colour changes with no signs of bleeding.

Stamp inks and ink for fountain pens demonstrated significantly higher stability compared to silver ink pencils in the standard disinfection procedure. Such recording media showed a significantly lower colour change and most of them did not bleed. On the other hand, it has been shown that the fixation of these recording media using a combination of Mesitol NBS and Revin EL solutions bears a considerable risk due to the high probability of their dissolution during fixation. The ink for NORIS stamps (set 9) deserves special attention. Unlike other stamp inks, it showed significant bleeding during disinfection, with the degree of bleeding depending on the distance from the butanol source in the chamber.

The fixation method using cyclododecane proved to be ineffective in preventing changes in recording media during standard disinfection.

The tests conducted are only a simulation of the actual disinfection process, where other factors affect the activation of dyes in older records. Real-life aged records may have different environmental interaction characteristics and the dyes contained in them may be in a different chemical state as a result of natural aging. Therefore, further research will continue to test the interaction of naturally aged records with butanol vapours.

Bibliography:

BACÍLKOVÁ, Bronislava, 2015. Studium účinků par butanolu a jiných alkoholů na plísně. Online. Praha: Národní archiv. Available at https://old2.nacr.cz/wp-content/uploads/2015/11/butanol.pdf. [accessed on 2024-01-11].

BREDERECK, Karl a SILLER-GRABENSTEIN, Almut, 1988. Fixing of ink dyes as a basis for restoration and preservation techniques in archives. Restaurator. Issue 9, pp. 113-135.

ĎUROVIČ, Michal, 2022. Restaurování a konzervování archiválií a knih. Praha: Paseka. ISBN 80-7185-383-6.

ĎUROVIČ, Michal; DENDEROVÁ, Michaela; MATUŠÍK, Jan a STRAKA, Roman, 1999. Fixace novodobých psacích prostředků syntetickými polymery – studium odstranitelnosti a fyzikálně-chemických vlastností některých vybraných fixačních prostředků. In: X. seminář restaurátorů a historiků: Referáty. Litomyšl, 24.–27. září 1997. Praha: Pobočka ČIS při Státním ústředním archivu v Praze, p. 248.

KARBOWSKA-BERENT, Joanna, 2014. Dezynfekcja chemiczna zabytków na podłożu papierowym – skuteczność i zagrożenia. PDF. Toruň: Wydawnictwo Naukowe Uniwersytetu Mikołaja Kopernika. ISBN 978-83-231-3088-8.

KARBOWSKA-BERENT, J. et al., 2018. The initial disinfection of paper-based historic items – Observations on some simple suggested methods. Online. International Biodeterioration & Biodegradation. Vol. 131, pp. 60-66. ISSN 0964-8305. Available at https://doi.org/10.1016/j.ibiod.2017.03.001 [accessed on 2024-01-11].

MAKOVÁ, Alena a HAFKOVÁ, Zuzana, 2019. Záznamové prostriedky a možnosti ich fixácie pre vodné konzervačné procesy. Bratislava: MV SROV. p. 6-16. ISBN 978-80-971767-5-4.

ORLITA, Alois, 1991. Nový systém devitalizace plísní na historických písemnostech. In: Sborník 8. semináře restaurátorů a historiků, Železná Ruda – Špičák. Praha: Státní ústřední archiv v Praze. pp. 258-267.

PAULUSOVÁ, Hana, 2003. Využití cyklododekanu pro přechodnou fixaci vodorozpustných barviv. In: XI. seminář restaurátorů a historiků: Referáty, Litoměřice, 13.–16. 9. 2000. Praha: Státní ústřední archiv v Praze. pp. 250-255.

ZMEŠKAL, Oldřich; ČEPPAN, Michal a DZIK, Petr, 2002. Barevné prostory a správa barev. Online. Vysoké učení technické v Brně. Available at http://imagesci.fch.vut.cz/download/stud06_rozn02.pdf. [accessed on 2024-01-11].

KAZANSKII, Andrei; ZEMBJAKOVÁ, Rebeka a NEORALOVÁ, Jitka. Stálost inkoustových tužek a razítek v parách butanolu při standardním postupu dezinfekce. Knihovna: knihovnická revue. 2024, Vol. 35, Issue 2, p…ISSN 1802-3252.

]]>KEYWORDS: Bezno, Březno, Skalsko, church music, thematic catalogue, collection of sheet music, composers, teachers, choir directors, 18th century, 19th century

]]>KEYWORDS: education, convents, monasteries, Middle Ages, manuscripts, incunabula, Benedictines, Domi- nicans, Poor Clares, Premonstratensians, reading, breviaries, psalters, Bible, Kingdom of Bohemia, Bohemia, women, book culture, vernacularization, German, Czech

]]>Keywords: linked data, BIBFRAME, RDF, IFLA LRM, metadata, cataloguing, MARC format, library cooperation

This study was created on the basis of institutional support for the long-term conceptual development of the National Library of the Czech Republic as a research organization provided by the Ministry of Culture of the Czech Republic (DKRVO 2024-2028), Area 11: Linked Open Data.

Introduction

In December 2006, the Library of Congress (Washington, D.C.) established the Working Group on the Future for Bibliographic Control, led by José-Marie Griffiths (University of North Carolina, Chapel Hill). One of the Group's task was to collect new knowledge on the impact of standards for the processing of bibliographic and authority records and cataloguing procedures on the management of information resources in libraries and in their access to them in the new information and technological environment (Library of Congress, 2006).

In January 2008, the Group published an important report On the Record (Library of Congress. Working Group on the Future of Bibliographic Control, 2008). In chapter 3.1 Web as Infrastructure, the report stated that the MARC format is built on forty-year-old programming techniques and is not in line with the present day’s programming styles. The MARC format is used exclusively in the library environment and is not compatible with other systems working with bibliographic data. A broader use of bibliographic data requires a format that will accept and distinguish metadata created by experts, generated automatically, and created by users, including annotations (reviews, comments), and data on the use of the source.

Based on the recommendations formulated in this report, on October 31st, 2011, the Library of Congress announced the Bibliographic Framework for the Digital Age initiative, or BIBFRAME (Library of Congress, 2011).[1] The announcement of the BIBFRAME initiative was one of the important impulses in the development of new bibliographic data formats based on the RDF[2] (Resource Description Framework) model. One of the arguments for choosing the RDF model was the fact that it is a method recommended by the World Wide Web Consortium (W3C) for conceptual description or data modeling in the Web environment.

The use of RDF and other techniques supported by the W3C consortium generally allows for better integration of data from library systems and other cultural heritage systems in the Internet environment with the aim of advanced and broader user access to information (Library of Congress, 2011). One of the main results of this initiative is the creation of an ecosystem of models, ontologies and other tools for the creation and management of linked data with the same name BIBFRAME, which is gradually being implemented in selected library databases around the world.

In addition to the BIBFRAME format, another significant achievement in this area is the development of an RDF-based RDA ontology based on the RDA cataloguing rules: Resource Description and Access – Official version . RDA Official together with BIBFRAME represent two initiatives that influence one another and are also gradually complementary, which must be seen as successors to the MARC 21 format and the RDA cataloguing rules in the Original version (currently used in the Czech Republic) for bibliographic and authority data.

How will we reaspond to this development in the Czech Republic? Is it possible to gradually change the data formats in libraries in our environment? What would be neeeded to prepare for such a change to occur?

The Goal of the Study and the Methods Used

The goal of the study is to analyse the possibilities of implementation and use of linked data formats in the environment of Czech libraries. Based on the research and analysis of sources from other countries, we will introduce the topic of linked data formats and the possible advantages of their implementation. We will evaluate the current state of processing and cooperation in the field of bibliographic and authority data in the Czech Republic with a focus on preparing data for possible conversion into linked data formats. For the research, we used mainly the analysis of bibliographic and authority data of the Czech National Bibliography. We describe the processes of processing bibliographic and authority data in the Czech Republic. We identify areas for improving and optimizing the exchange and sharing of data in the library network, as well as for cooperation among libraries and surrounding systems, especially the publishing sector. We present the advantages of implementing linked data formats for collaboration in libraries and among libraries and surrounding systems in the Web environment. The study is intended to contribute an outline of a solution for the implementation of an ecosystem of linked open data in the Czech Republic.

From MARC to Linked Data

Bibliographic and authority data processing in the Czech Republic is shaped by a number of standards. MARC 21 has been used as the main exchange format since 2004, with the RDA Cataloguing Rules (Original version) being used in combination with the MARC 21 format starting from 2015. These international standards are supplemented by methodologies and interpretations published on the National Library of the Czech Republic’s website devoted to cataloguing policy. However, for over almost two centuries, a number of other standards and methods have been used in our territory. Many collections have historically been processed on cataloguing cards according to various standards. The cards only began to be converted into a machine-readable form in the 1990s onwards as part of retrospective conversion (machine reading of cards) or retrospective cataloguing (with a document in hand) projects. As a result, we can really find a mixture of many different approaches and rules in today's databases.

Since we have adopted MARC 21 as a standard in the Czech Republic in 2004, it might seem that it has been a relatively modern format. However, the opposite is true. MARC 21 closely follows its predecessors, whose creation dates back to the 1960s. Therefore, the format has gone through almost sixty years of history, and as discussed in the On the Record report (Library of Congress. Working Group on the Future of Bibliographic Control, 2008), it was created using relatively old programming and data management techniques, from today's point of view. The MARC 21 format is closely related to Anglo-American cataloguing procedures, its form was influenced by the form of a paper cataloguing card. It is still necessary to use punctuation according to the ISBD standard (International Standard Bibliographic Description) for the data structure in some fields and subfields. The overall structure is very outdated and inflexible. It cannot respond well to the present day’s data models (e.g., IFLA Library Reference Model, hereinafter referred to as IFLA LRM, Riva, 2017). The MARC 21 format is often used in library systems not only as an interchangeable data format. Library systems often offer forms for cataloguing based on individual fields of the MARC format, the fields are marked with appropriate tags, the librarian writes down the indicators, marks the individual subfields, and must use the prescribed punctuation.

MARC 21 (or other MARC formats) is used exclusively by the library community and is essentially incomprehensible to other systems used in memory institutions (archives, museums) or in publishing and book market systems. For the above reasons, as well as for the benefit of better communication of data within the broader environment of libraries on the Internet, and especially for easier communication of data in the Web environment, it would be more appropriate to abandon the MARC formats and start using formats based on more general standards also used outside library sector.

Libraries around the world have worked with MARC formats and related technologies (e.g., Z39.50) for a really long time, using them to share hundreds of millions of bibliographic and authority records. "Everything from system integration to all cataloguing work is built on the MARC 21 format," as the National Library of Sweden’s experts state (2019). It is therefore very difficult to put an end to such practice and start using completely new procedures and techniques. In order to evaluate the possibilities of converting existing bibliographic and authority data into linked data formats, it is necessary to examine in more detail the processes of creating bibliographic and authority records in the Czech Republic and especially the cooperation in their creation.

First of all, we will explain in more detail how to work with linked data in libraries abroad and what impact the implementation of linked data formats could have on the entire data processing process in libraries.

The Topic of Linked Data in Libraries

The use of linked data in library systems is not a new topic in the Czech Republic. Already in 2010, Jindřich Mynarz and Jan Zemánek (Mynarz and Zemánek, 2010) published a paper titled Introduction to Linked Data in the Knihovna plus, where they characterize the principles of linked data formats and their (as they themselves state) technological profile. A large part of the paper is devoted to the use of linked data in the library sector, as an example from the Czech Republic, they mention the conversion of the Polythematic Structured Entry Index to the SKOS format (National Technical Library, 2016–2024).

In the following years, papers in Czech mentioning these topics would follow, by Barbora Drobíková (2013, 2014), Klára Rösslerová (2016, 2017a, 2017b, 2018), and Helena Kučerová (2018, 2019). An important achievement in this area are the projects of the National Library of the Czech Republic related to the interconnection of the TDKIV terminology database and the name authority database with Wikidata, which are described mainly in the works of Linda Jansová (2019, 2020) and Zdeněk Bartl (2019). The possibilities of using linked data in the Knihovny.cz database are described by Michal Denár and Josef Moravec (2023).

For more information about linked data in libraries, you can use the links page on the Information for Libraries portal (National Library of the Czech Republic, 2024). In 2023, the Cataloguing and Linked Data webinar was organised by the Union of Librarians and Information Workers of the Czech Republic (SKIP ČR). Videos from the webinar are available on the SKIP ČR website.

A large number of papers have been published and written on the topic of linked data in libraries abroad in the last twenty years. A systematic review of published resources on the topic of linked data in libraries was presented by a team led by Panorea Gaitanou in the Journal of Information Science (Gaitanou, 2024). The paper summarizes works published between 2008 and 2019. It deals exclusively with articles published in expert periodicals written in English, book chapters, and papers in proceedings. It does not include any theses or dissertations, white papers, or similar sources.

The results of the systematic review are divided into several chapters:

- Linked Data Implementation in the Cultural Heritage Domain, including subchapters: Linked Data Implementation in Libraries and Bibliographic Control, Linked Data Implementation in Specific Projects, Specific Approaches to Linked Data and Methodologies;

- Description of Specific Bibliographic Models, including subchapters: FRBR , BIBFRAME, and RDA models;

- Interoperability Issues: Mapping and Crosswalks, including subchapter Mapping and Crosswalks Using the BIBFRAME model;

- Other Issues, including subchapters: KOS (Knowledge Organization Systems), Linked Data and Metadata Quality, Privacy in Libraries, Librarian’s Position o in the Linked Data Environment, and Educational Material.

The authors processed a total of 239 sources. The above chapter and subchapter titles clearly show which topics were most often covered by the published sources in the period 2008–2019. These are mainly topics related to bibliographic and authority control, the IFLA LRM and BIBFRAME models, interoperability of metadata in library systems with an emphasis on the transition to new linked data formats. The systematic review confirmed the fact, as the authors themselves state, "that linked data are becoming the mainstream trend in library cataloguing especially in the major libraries around the world as well as the most important research projects initiated by libraries in an attempt to make bibliographic data and collections more discoverable in the WWW and their users, more meaningful as well as more reusable " (Gaitanou, 2024, p. 218). However, thanks to such a detailed overview, it is also clear that there are topics that many authors have not yet touched on in their research work from the period 2008–2019. Gaitanou and colleagues state that these are mainly topics related to metadata quality control, lack of rules for handling (meta)data and sharing (meta)data in RDF format, and other topics.

From more recent works, we would like to mention Sophie Zapounidou's dissertation from 2020 entitled Study of Library Data Models in the Semantic Web Environment, in which she compares FRBR, BIBFRAME, RDA and EDM models . Julie Unterstrasser's 2023 thesis is also very inspiring, showing how the transition to the linked data format has affected the work and practice of librarians at the National Library of Sweden. The author emphasizes the significant shift in the work of cataloguers "from cataloguing to catalinking", or from cataloguing to creating links, as a fundamental change in the paradigm of bibliographic and authoritative processing of information resources in libraries. The aspect of the necessary further education of librarians in the field of linked data is also important.

The importance of the topic of linked data is shown not only by the articles published or dissertations, but also by specific ongoing projects to implement linked data formats into bibliographic control processes in many large libraries around the world. Since 2017, the BIBFRAME Workshop in Europe (https://www.bfwe.eu/) has been held annually to bring updates on the state of linked data formats’ implementation from European countries with a large number of current papers by prominent authors in this field. The studies and conference papers by Ian Bigelow with co-authors from the University of Alberta (e.g., 2020, 2022, 2023), Tiziana Possemato with co-authors from the Casalini Libri Library (e.g., 2020, 2022, 2023), which is the organizer of the workshop, or Stanford University’s Nancy Lorimer (e.g., 2022, 2023) or Library of Congress’s Sally McCallum (e.g. 2022, 2023), and many other experts are inspiring.

The number of projects already implemented for the conversion of bibliographic and authority data into linked open data formats is also shown in the Proposal for the Publication of Linked Open Bibliographic Data, a 2023 study by F. A. de Jesus and F. F. de Castro, who identified a total of 58 projects from around the world, projects of national or university and specialized libraries and networks in Spain, Finland, Sweden, Germany, Hungary, and, especially, the United States of America.

The need to switch to linked data is also shown by the 3R Project, which aimed to completely redesign the RDA rules in the Original version to the RDA in the Official version. The RDA Official includes the RDA Registry as its integral part – ontologies for the RDA/RDF linked data format. The aim was also to completely redesign the RDA Toolkit, individual instructions and paragraphs with regard to the use of rules in combination with linked data formats (e.g. Alemu, 2022, p. 197; Oliver, 2021). It is assumed that from 2026 onwards, RDA will only be used as rules in the Official version, and the Original version will be cancelled.

Entity-Oriented Cataloguing: How Bibliographic and Authority Data Management Can Change

As early as 1995, Michael Heaney published an important study dealing with object-oriented cataloguing (Heaney, 1995). The AACR2R cataloguing rules were the context of his study. Heaney called for greater emphasis on the precise identification of the different types of authority that can be interlinked. Networks of linked authorities would then represent individual records. It can be said that such a visionary work can now be completed in a certain way. In the context of linked data formats, new terms emerge, such as identity or entity management or entity-based cataloguing (e.g., Durocher et al., 2020; Stalberg et al., 2020; MacEwan, 2022; Zapounidou et al., 2024).

Linked data technology is based on precise identification of structured data that represents entity instances and the relationships between them using unique URIs (entity instances, relationships, and their corresponding URIs are registered in controlled dictionaries and ontologies). Structured data representing certain types of entities and controlled dictionaries, as Zapounidou et al. (2024) wrote, are the very heart of the cataloguing process known as authority control. At this point, the authority control processes, as we know them from library databases, coincide with linked data management technologies. However, managing linked data requires highly automated processing based on URIs. We often rely on human interpretation and the use of textual chains in authority control processes, e.g., at the level of the selection of authorized input elements according to various cultural and linguistic conventions (see Zapounidou et al., 2024 for more details).

If we discuss entities, then linked data formats, whether we mean, e.g., BIBFRAME or formats based on RDA/RDF and IFLA LRM, will require management of all entities that occur in bibliographic databases. This concerns not only entities that are in the area of interest of authority control today, such as names (personal, corporate), titles of works, geographical names, or subjects. In the language of the IFLA-LRM model (Riva et al., 2017), there are also entities related to expression, manifestation, item, agent, nomen, or time span. For instances of all entities, it is necessary to manage value vocabularies with unique URIs. Document identification (today represented by a bibliographic record) will then be formed as a network of mutual relationships among entity instances (individual occurrences of entities, e.g., a specific person, a specific place), and both relationships and individual entity instances will be represented by URIs (in reference to J. Unterstrasser (2023) above: "from cataloguing to catalinking".)

Advantages of Linked Data Implementation Not Only for Cataloguing

So far, we have only dealt with the implementation of linked data formats in the field of library data management or cataloguing. However, the advantage of deploying linked data formats is best reflected in the interconnection of library databases and external resources in the Web environment, in better visibility of libraries on the Web, and thus in better services to library users. MARC formats are not easy to understand for other systems outside the library community. Linked data formats can enable better interoperability of data across communities – the publishing environment, the GLAM sector – galleries, libraries, archives, museums. Publishing data in linked data formats will make it easier for web search engines to index data from library databases and make the data visible in normal web searches. Such data will enable the interconnection of library databases with external sources of information, such as GeoNames or Wikidata, and enriching the user interfaces of library catalogues and discovery systems from external sources.

The first outputs of data enrichment projects from external sources can also be tested in the Czech Republic, e.g., in the Knihovny.cz portal, as described by Denár and Moravec (2023). The NKlink tool is another example of a good practice (Jonáčková & Dostál, 2020), which enriches authority records with external identifiers, including Wikidata identifiers. The ability to enrich library data with data from external sources is one of the most common arguments for the deployment of linked data formats in libraries and the replacement of obsolete MARC formats. Because the introduction of linked data will help to work with data much more efficiently, it can offer users new search options that are currently only available in complicated ways or are not available at all.

Situation in the Czech Republic

Cooperative Production of Bibliographic Records

The cooperative production of bibliographic records in the Czech Republic is built on several pillars. The main pillar comprises centrally defined standards, such as cataloguing rules (in the Czech Republic, these now include RDA: Resource Description and Access, Original version) and the exchange format (MARC 21 bibliographic and authority format). Other important pillars comprise building of the Czech National Bibliography (including a set of national authorities) and producing union catalogues, to which Czech libraries can contribute new bibliographic records and from which libraries can download records for use in local databases.

The Czech National Bibliography (CNB)

Under the Act No. 257/2001 Sb. (hereinafter referred to as the Library Act), Section 9(2b), the National Library of the Czech Republic "prepares the national bibliography and ensures the coordination of the national bibliographic system". According to the subsequent sections of the same Act, all regional libraries (Section 11(2a)) and specialized libraries (Section 13(2a)) collaborate on this task.

The basis of the CNB consists of libraries within the so-called "cluster": the National Library of the Czech Republic, the Moravian Library in Brno (also in the role of the regional library of the South Moravian Region), and the Olomouc Research Library (also in the role of the regional library of the Olomouc Region). These libraries work within a shared database. In addition, the records of regional libraries are received by the CNB through the Union Catalogue of the Czech Republic. Bibliographic records for fiction are contributed by the Municipal Library in Prague as part of the Central project (Lichtenbergová, 2023). Development of the CNB is one of the so-called national functions of the National Library of the Czech Republic. The CNB database is a very important and irreplaceable source of information on published cultural heritage in the Czech Republic.

The CNB currently makes 1.2 million records available. The contribution to the creation of the bibliography can be illustrated by the statistics of the originators of records generated according to the new RDA rules in force since 2015. Since that year, 209 thousand records have been created according to the RDA, including:

- 64 thousand records by the National Library of the Czech Republic – location code ABA001

- 19 thousand records by the Moravian Library in Brno – location code BOA001

- 18 thousand records by the Olomouc Research Library – location code OLA001

Together, these three libraries have created about a half of the bibliographic records at the CNB since 2015. Other regional and other specialized libraries participated in creating another hundred thousand records, and several records were even originate from a foreign library. The Municipal Library in Prague contributed with almost 13 thousand records16.

Union Databases

The Union Catalogue of the Czech Republic, a centralized heterogeneous union catalogue, contains 8.4 million bibliographic records, including the CNB records. It has been available electronically since 1995 (Svobodová, 2003). Currently, 530 libraries collaborate on its development (Union Catalogue of the Czech Republic, 2023). According to the Act No. 257/2001 Sb., Section 9 (2a)), the Union Catalogue of the Czech Republic is produced by the National Library of the Czech Republic. In addition to the CNB, the building of the Union Catalogue is also part of the so-called national functions of the National Library of the Czech Republic.

Only monograph records can be contributed to the Union Catalogue via the OAI-PMH protocol. For serial resources, it is possible to update subscriptions of individual titles using an online form. The frequency of contributions varies. When data is entered into the Union Catalogue, multiplicity and quality of records are checked. In the event that an entry already exists in the Union Catalogue of the Czech Republic (hereinafter referred to as the SKC), only the location code of the new (sending) library is registered and a link is created to its local catalogue. For a new record, the entire record is inserted into the SKC, including the link to a local base. Where a record does not have the desired quality, it is returned to the sending library for correction. Some libraries do not provide their entire collections for harvesting in the SKC database, but only a certain selected part, e.g., by document type.

We should mention the Knihovny.cz portal as another representative example of union databases, which now includes 100 libraries. In addition, the portal provides access to other resources that it harvests, including the Union Catalogue of the Czech Republic (Knihovny.cz, 2024b). Using the Z39.50 protocol, libraries can have their individual profiles defined, which allow them to search and download records from various Czech and foreign sources (Knihovny.cz, 2024a). The advantage of the Knihovny.cz database is that it often receives records of a larger part of the collections from libraries. It is therefore a relatively interesting source of, for example, audiobook recordings or special documents such as talking books or board games.

Given the role of the Knihovny.cz portal, the titles here do not have a truly collective record (SKC). For the purposes of searching, the system works with each record that it has received from all libraries that hold the title. The records are interconnected using duplicate control (Kurfürstová et al., 2023). A different record is used at different times, for example, based on a home library of a logged-in user, or the selection of a library when searching. All records in the Knihovny.cz index are then offered through Z39.50. Their quality varies considerably. At the same time, however, the portal often provides records that are not available in other sources.

Current Collaboration Workflow

In addition to the indisputable advantages that result from the development of the CNB and union databases for cooperative cataloguing, it is also possible to observe certain weaknesses that have accompanied the existing models of cooperation throughout the existence of library databases, and not only in the electronic environment. We could summarize them using two concepts of multiplicity and asynchronicity. This is reflected in practice, for example, in the following situation: a library requests a complete record of a title that has just been delivered, for example in the Union Catalogue of the Czech Republic. If the library does not find it there, then it will have to create such a record. This can happen in the span of hours or days in a number of libraries. If a library cooperates with the SKC, the record created by it will be harvested via the OAI-PMH protocol, sometimes with a weekly (or even monthly) periodicity. In the SKC, multiplicate records of various quality are created, which must be deduplicated in a complex way. Moreover, it is not easy to apply partial changes to deduplicated records based on records sent from libraries. At a certain point, the system only registers that the title exists in the given library.

Thus, part of the work generated by this parallel activity is not relevant to the cooperative system. In individual libraries, records of varying processing complexity and quality are created in such manner. This does not mean that the system is poorly designed. All negative properties are based on the multi-speed dynamics of the distribution of records in the system. This is partly due to the fact that harvesting records from hundreds of libraries is time-consuming, as is the subsequent deduplication and further processing on the part of the union databases. The MARC format also plays a role, as its structure often does not allow for effective qualitative evaluation of individual records with regard to the creation of a high-quality union record. Algorithm-based evaluation weighs are used, but these tend to work with formal record checks. This is also due to the fact that the amount of information in the MARC structure is recorded only as text, without placing it in a broader context or assigning a specific meaning to the information. The whole process is made even more complex by the complicated syntax and rules for creating records, which in some respects allow different approaches to description or notation.

As mentioned above, the CNB is produced mainly by the three major libraries in the cluster. These libraries obtain published documents in the Czech Republic mainly in the form of legal deposits. This in itself often brings significant delays in the processing of documents that have been available on the market for some time. In reality, regional or specialized libraries are able to obtain these documents earlier than the "cluster" libraries, and are forced to be the first to process the documents. Given the requirements of their users, they often cannot wait for a high-quality record to be created at the CNB or for the record to be downloaded into the union databases. Although the CNB forms the basis for the SKC, especially in the area of Czech production, it may happen that the records in the CNB differ from the records of the same titles. They contain some practical information that will be appreciated by both lending service workers and readers. An example can be an indication of pertinence to a cycle or high-quality annotation.

The biggest problem of the current cooperative system is the considerable number of duplicates and multiplicity of records. These are created by the fact that records from local databases are received in central union databases at different times. This is mainly due to the acquisition policy of individual libraries in connection with distribution. In addition, libraries send their records to union databases at various frequencies. As regards fiction, the Central project managed by the Municipal Library in Prague (Projekt Central, 2024b), has significantly helped with the speed of cataloguing new titles. The project purchases fiction 3 times a week and process around 16 titles a day. They state that they send new publication records to the Union Catalogue 3 to 7 days of publication. However, asynchronicity occurs here as well. According to statistics, the project will cover approximately 80% of fiction published in our territory by the largest Czech publishing houses (Projekt Central, 2024a).

Design/Outline of a Vision for Future Solutions

In the cooperation model, where the recording is created only after the document is published and placed on the market, these weaknesses cannot be avoided. This situation could be addressed in many cases by creating a record before the document is issued or, at the latest, in parallel with its launch.

An important player could be the newly built joint database of libraries and publishers called the Register of Czech Books, sometimes also called ReČeK for short (Maixnerová, 2023). Thanks to it, libraries could obtain basic metadata before the title is released. The project could offer a usable data alternative to "cataloguing in a book", which has only partially spread in our environment, rather in professional literature. Thanks to the direct recording of values by the publisher, the record could be more complete than the CIP and ISN (reported books) databases currently provide. In addition, metadata will be available for download before the publication of the book in the library network (e.g. for acquisition purposes), starting with the creation of a record and in one place. The persistent entity identifier will remain the same and public, but the metadata will be further added during the cataloguing process. Until the title is actually published, the data can be edited to react, for example, to changes in the title or the number of pages.

If each such title also received a unique identifier (before the ISBN and the CNB), it would be possible to create records referring to this identifier. If a central metadata repository is also created in the National Library, it would be technically feasible to distribute all changes in records to the entire ecosystem. This would create a single record that would be distributed by the system to local databases. This solution could prevent the creation of different versions of records between the central database and the local record in the connected library. At the same time, the central record could be complemented and improved in a cooperative way. Any change would soon be reflected in all local copies. In addition, it would be possible to insert certain data only for local use, or, conversely, to hide some information for use in a specific library.

National Authority Files

Today, we can no longer imagine high-quality bibliographic records without access point based on authority files. Authority files are an important building block of bibliographic databases, allowing to uniquely identify specific instances of entities, link bibliographic records, and playing a significant role in searching databases and metadata. At present, library databases mainly use authority records for persons (personal names), corporations, subject terms/descriptors, formal descriptors, geographical names, titles of works and expressions (in the form of authority records for anonymous works, or in the authority records of the name-title type for works listed under the author's name). However, the representation of the authoritative forms of the above-mentioned types of entities is far from being one hundred percent in bibliographic records.

As an example, the CNB database contains 866 thousand records with the field 100 (Main entry – personal name). There are 797 thousand occurrences of the subfield 7 that contains an authority record’s identifier. Of the 731 thousand occurrences of the 700 field (Added entry – personal name), the subfield 7 with an authority record identifier has 628 thousand occurrences.

The CNB database can be considered a very well-managed database with the best quality of cataloguing possible. Personal names are the most common and most commonly produced authority records in general. Nevertheless, even in the CNB’s fields 100 or 700 for personal names, not all forms of names are based on authority files. Such a situation is quite logical, because the CNB contains different layers of records from different periods.

Table 1 Proportion of identifiers completed for CNB personal and corporate authority records

In addition to the data that can currently be linked to authority records, there is also data in bibliographic records for which it would be appropriate to create authority records or controlled vocabularies for the sake of unambiguity of search and linking, but this is not done as a result of the cataloguing traditions. These concerns mainly data on publishers (the issue was addressed, for example, by Drobíková et al., 2016), places of publication or production of documents. We can also include the issues of recording time/date or time span (the time span occurs in many fields of bibliographic and authority records – e.g., date of publication, field 264, 008; date of record creation, field 008/00-06, date of record update, 005, dates related to the document’s content given in the subject fields – 648, 045, for authority records, these concerns data associated with individuals, corporations, with the time-limited existence of administrative units, or with the creation or updating of a work, or its expression, the creation of a recording, etc.).

Collaboration on Authority Records

In the case of authority records, cooperative creation in the Czech Republic is used mainly for authority records of personal, corporate and geographical names. The cooperative creation of other types of authority records (subject terms, title authority records) is limited to the cooperation among the relevant departments of the National Library of the Czech Republic and cluster libraries due to the more demanding administration, complexity of the structure, and dependence on the terminological systems of individual subject areas. In practice, therefore, we often encounter situations, in which libraries use non-authoritative forms of names and titles in the relevant fields (title, subject). The possibility of linking such records may be significantly limited for these reasons.

Like in the case of collaborative production of bibliographic records, there is a delay and a certain asynchronicity in the creation of authoritative records, too. Authority records for authors are usually created only after the publication of a document, generally a printed one, especially a book. To a lesser extent, article production is taken into account, and authors of articles in Czech periodicals or periodicals published in our territory are taken into account. The scientific community publishing articles abroad or the authority files for their research workplaces often do not even fall within the scope of Czech national authority files. A similar situation is common for other types of documents, such as electronic textbooks or educational videos.

Authors (and other originators) of works that are published only electronically now also remain unprocessed. Where an electronic legal deposit is received, there will be a need to create authority records even for authors who have not yet been processed. This may mean an additional burden for the National Library of the Czech Republic. In the future, the solution is to decentralize the production of authority files as much as possible among a larger number of cooperating entities that will form a single metadata base together. This system should be designed in such a manner as to allow for different levels of rights for registration and suggesting modifications. The solution must be set up so that despite the participation of a broader community, duplicate records are still minimized . The complete elimination of duplicates is unlikely to be avoided in the future, but efforts will continue to minimize their occurrence, both at the process level and through the use of technical tools and procedures.

Independently of authority record production, a unique identification of authors is being expanded using other types of identifiers around the world and in the Czech Republic, e.g., ORCID identifiers (Open Researcher and Contributor ID) for publishing in the field of science and research or the ISNI identifier (International Standard Name Identifier) . Experts from universities and research institutions may apply for the ORCID identifier before a publishing activity takes place. The identifier assigned then accompanies them in the publication of various sources (e.g., studies, textbooks, articles in electronic form), whether in the Czech Republic or abroad.

Linked Data as a Means for More Efficient Library Collaboration As we mentioned earlier, the MARC format now affects the entire process of producing and distributing metadata. Using a timeline of the process of creating one particular record in the Union Catalogue of the Czech Republic (SKC), we can demonstrate how the current model works.

We chose the work Šikmý kostel (The Leaning Church), Part Three, by Karin Lednická, as a sample record. The title was published on 15/04/2024 and its record appeared in the SKC on the next day, on 16/04/2024. Unfortunately, it contained an incorrect CNB number (field 015). Since it was a planned and anticipated title, the interest in the record from libraries was considerable. The record began to spread across libraries via the Z39.50 protocol, including the error. There is no way to count the number of downloads. Only some of the libraries that used the record are involved in the cooperative cataloguing for the SKC. This means we can only monitor the number of imports from individual libraries. Individual imports can be related to specific days and times, but these are often not the same as the date of entry into local databases.

As we mentioned above, imports to the SKC usually take place in weekly cycles, but in practice we encounter both shorter and significantly longer intervals. The error was corrected on 24/04/2024 by an SKC employee. Automatic editing of records in local databases after a change in SKC is an issue, and thus records are usually updated manually, or not updated at all. Some errors may remain in the library databases. In a sample of records, we found some records not containing any CNB numbers at all. This may be a problem in the future, as it is a key identifier that can play an important role in future migration to linked data formats, as well as help in further work with metadata. Without the identifier, unique identification is difficult to imagine .

Fig. 1 Šikmý kostel (The Leaning Church), Part Three (2024): a timeline of record changes

The transition to linked data should also include a set of strategic decisions that will help us tackle most of the problems brought about by the existing system of cooperation during the change. We should focus on two key aspects that we have identified, i. e., time asynchronicity between the need for a record and its delivery to the central repository, and the absence of a unique title identifier that is available before release. Another key feature of the new solution is an ability to distribute all changes from the repository to the participating institutions very quickly, almost in real time.

The Register of Czech Books (ReČeK) should be given an essential position in the planned system. This database should be co-created as a joint work of publishers, libraries, and the Czech National Agency for ISBN and ISMN. It will contain information about books from the moment they are included in the publishers' editorial plans. Publishers require an ability of ReČeK to provide structured information on titles in the ONIX format , which they set as a pre-condition for their cooperation. The ONIX format is successfully used internationally for the needs of publishers, distributors, and booksellers. ReČeK should then enrich information from publishers with contextual information that is important for use in libraries (linking authors to personal authority records, subject authority entries mapped to publisher's subject description, publishers' databases, etc.). The plan is that an ISBN will be assigned immediately when a record is imported to ReČeK. The system must then be able to provide the "library version" of the metadata to libraries, at least for a transitional period, in the MARC format, and, at the same time, in a structure suitable for linked data creation.

When creating a record in the ReČeK database, a record should ideally be created at the same time in the central metadata repository (with feedback to the ReČeK system). The linked data structure will allow for a better description of the interrelationships among entities. It will be necessary to rethink the ways in which certain kinds of records are created.

According to the instructions currently in place, it is possible to create either a collective record for all volumes (top-down), or records of individual parts (bottom-up) for multi-volume monographs. The instructions define some cases for choosing either of the options. Unfortunately, due to varying interpretations of the instructions, it happens that different libraries create records for the same titles using both top-down and bottom-up approaches. It is not always easy to distinguish such records from each other at first glance. Their structure can be very similar. Especially, when searching via Z39.50, it is not clearly stated anywhere in the interface whether a record concerns a single title or a series of titles. It is possible to distinguish such cases only with the help of some (basically partial) aspects of the record – for example, the year of publication can be written as a range for a series, the physical description will contain the number of volumes instead of the number of pages, and so on, for group records the code "a" should be given at position 19 in the LDR . Sometimes it happens that a collective record with the CNB number is downloaded to a local database, which is then modified using the bottom-up approach and the CNB is not removed, which then causes complications in the identification of the document.

In the linked data structure, we have significantly more options for connecting individual entities into logical units, including hierarchical links. We can easily distinguish such entities by having different classes. Links can change dynamically over time. For a better understanding, we give a specific example, again using the title Šikmý kostel (The Leaning Church).

In the CNB database, there is a so-called collective record for the Šikmý kostel (The Leaning Church) series, and the record has been assigned its own CNB identifier. The record also contains the ISBNs of the individual parts. At the same time, there may be separate records for the individual volumes in the Union Catalogue of the Czech Republic and in local library catalogues. In a description using linked data, we can link the records of individual parts to each other.

Similarly, for example, in the case of personal authority records, different identities of persons can be combined. Again, there is no need to create an umbrella record that contains various reference forms of names, pseudonyms or language variants of names. Everything can be defined by mutual relationships of entities. However, a collective entity may also exist, depending on the ontology used/designed.